Build with iNAGO

From pilot to production deployment, guided by iNAGO experts.

netpeople Platform

Deploy and operate AI agents in real-world systems.

netpeople Starter

Turn documentation into deployable AI agents.

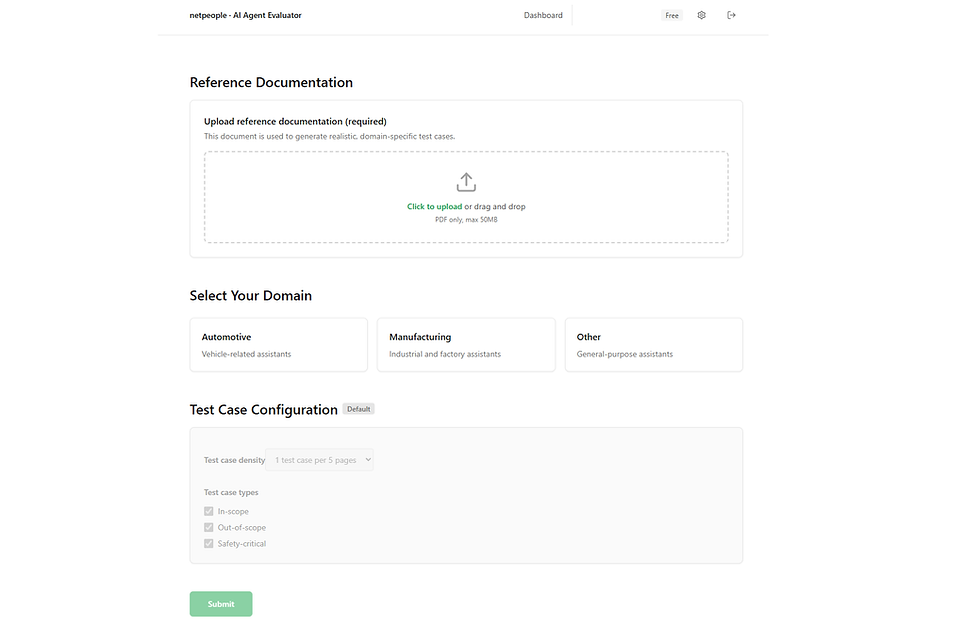

netpeople Agent Evaluator

Objective performance measurement across any AI system.

Build. Measure. Improve.


AI That Sounds Right Can Still Be Wrong

Generative AI produces responses, but real systems require reliability.

Answers may appear correct while being incomplete, inconsistent, or misleading.

Without measurement, these issues remain hidden until they affect real users.

From Documentation to Measurable Insight

The netpeople Agent Evaluator measures AI performance against the knowledge it is built on.

It generates evaluation scenarios, analyzes responses, and provides objective insight into how your AI actually performs.

Evaluate AI in Real Scenarios

From Insight to Improvement

Deeper evaluation unlocks more precise optimization.

Standard Evaluation

Clear performance scoring across key metrics.

Advanced Evaluation

Deeper analysis with expanded criteria and detailed breakdowns.

Optimized for

®

Direct connection to knowledge and logic — enabling continuous improvement.

From Insight to Optimization

Greater evaluation depth yields more insight and unlocks greater improvement.

The Measurement Layer in the netpeople AI Lifecycle

The Evaluator provides the measurement layer within the netpeople AI Lifecycle, connecting AI behavior to continuous improvement.

Without measurement, AI drifts.

With measurement, it improves.
